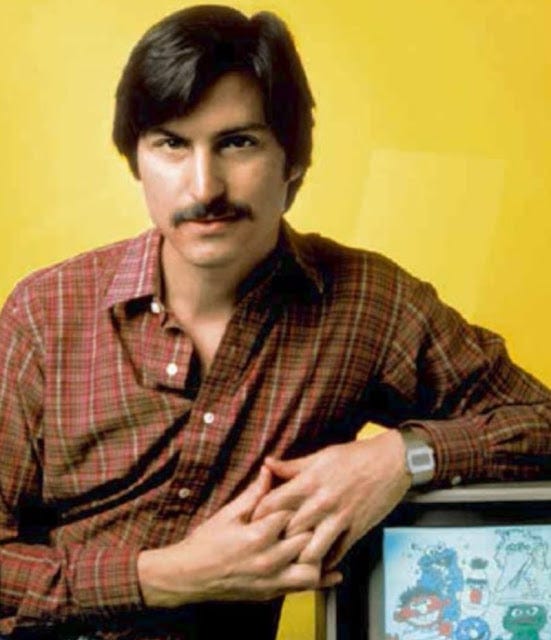

Steve Jobs, next to a PC making a homage to Charles Thacker’s Xerox Alto demo & Jim Henson

from: The early history of Smalltalk (01/1996): https://dl.acm.org/doi/10.1145/234286.1057828 p.24 (534 Chapter XI)

Beginning an essay halfway down a page, after a continuous juxtaposition of excerpts, may have turned you off, but my job as a volunteer historian is to follow my intuition, as Steve Jobs said in his 2005 Stanford commencement speech:

“And much of what I stumbled into by following my curiosity and intuition turned out to be priceless later on. Let me give you one example:

Reed College at that time offered perhaps the best calligraphy instruction in the country. Throughout the campus every poster, every label on every drawer, was beautifully hand calligraphed. Because I had dropped out and didn’t have to take the normal classes, I decided to take a calligraphy class to learn how to do this. I learned about serif and sans serif typefaces, about varying the amount of space between different letter combinations, about what makes great typography great. It was beautiful, historical, artistically subtle in a way that science can’t capture, and I found it fascinating.

None of this had even a hope of any practical application in my life. But 10 years later, when we were designing the first Macintosh computer, it all came back to me. And we designed it all into the Mac. It was the first computer with beautiful typography. If I had never dropped in on that single course in college, the Mac would have never had multiple typefaces or proportionally spaced fonts. And since Windows just copied the Mac, it’s likely that no personal computer would have them. If I had never dropped out, I would have never dropped in on this calligraphy class, and personal computers might not have the wonderful typography that they do. Of course it was impossible to connect the dots looking forward when I was in college. But it was very, very clear looking backward 10 years later.

Again, you can’t connect the dots looking forward; you can only connect them looking backward. So you have to trust that the dots will somehow connect in your future. You have to trust in something — your gut, destiny, life, karma, whatever. This approach has never let me down, and it has made all the difference in my life.”

It is certain that some people leave Easter eggs. Others leave cookie crumbs. Some might leave an open secret. Others might leave no secret at all, only something left intentionally in plain sight. And some might leave no discernible trace.

“Your time is limited, so don’t waste it living someone else’s life. Don’t be trapped by dogma — which is living with the results of other people’s thinking. Don’t let the noise of others’ opinions drown out your own inner voice. And most important, have the courage to follow your heart and intuition. They somehow already know what you truly want to become. Everything else is secondary.”

Intuition is sometimes mistaken for superstition, but it’s largely a product of practice and experience. It might not be able to predict superstring theory a continent away, but it can anticipate certain trends or futures.

I like to think of intuition as the culmination of one’s entire lifetime of experiences, plus the three billion or so years that genetic evolution conferred, from the time of the first microbes to the first hominids. A famous anecdote is told about Picasso:

“Picasso’s Napkin:

The story goes something like this:

Picasso was having a drink in a restaurant – probably a traditional French pavement cafe.

The admirer recognised him and exclaimed “Oh my goodness, are you the famous Pablo Picasso?” the painter nodded modestly, and the admirer went on to ask the famous painter to sign her napkin.

Picasso was happy to oblige and didn’t seem to mind the interruption at all, in fact he went one step further and added a small sketch.

But as he handed over the drawing he asked for a considerable amount of money in exchange.

The admirer was horrified, “But that only took you five minutes!” she exclaimed.

Picasso leaned over, carefully took the napkin back and said “No, dear lady, that took me a lifetime.”

Of course, decision-making skills can sometimes become inhibited, and decisions do not always reflect the culmination of intelligent judgement.

Tech Demos

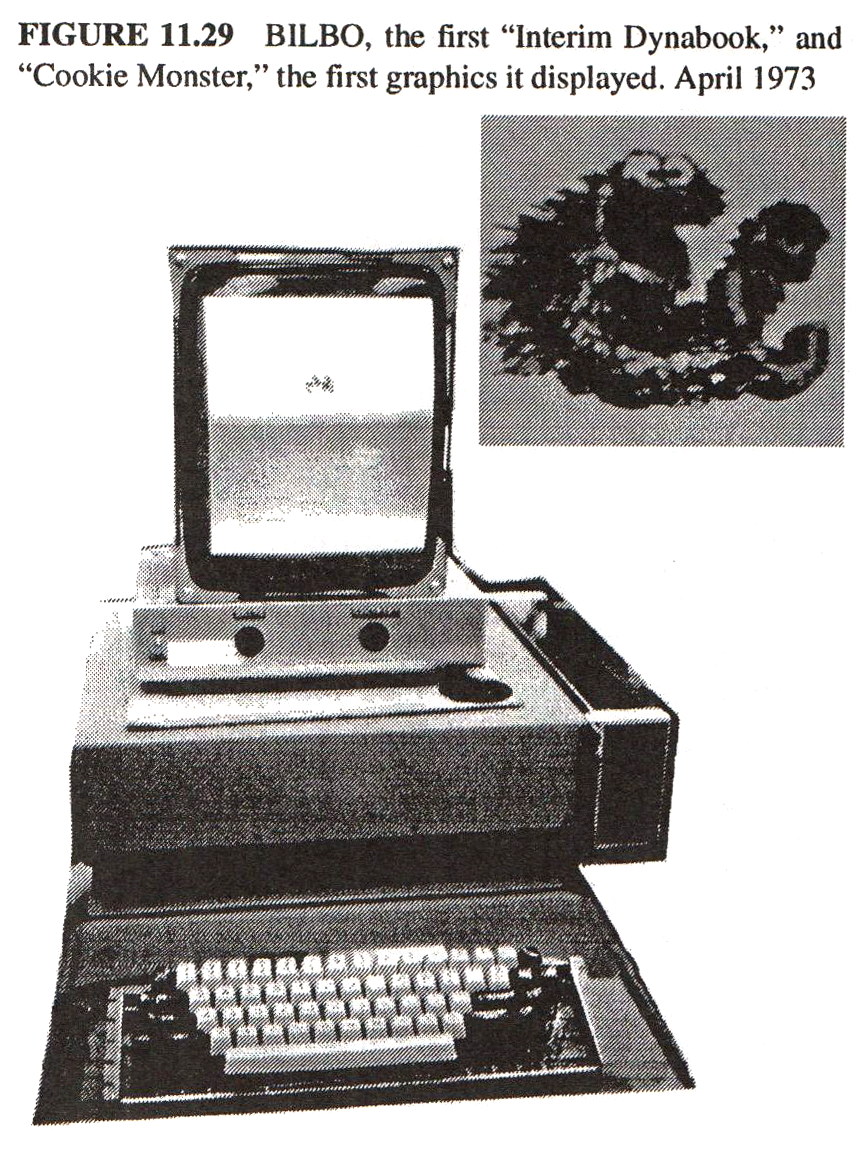

Today, cartoons are less disheveled than the motley Sesame Street crew, but Apple and IBM, early rivals, both cherished Jim Henson characters:

“The Coffee Break Machine is a Muppet routine created for an IBM training film, and used again for sketches in various appearances.

In the original IBM film, a monster (who would eventually evolve into Cookie Monster) wanders upon a talking coffee machine that has been set in "Auto-Descriptive" Mode. As the machine describes its parts, the monster eats them. Once the machine is finished, the voice of the machine from inside the monster tells him that he has activated the anti-vandalism program, which harbors the most powerful explosives known to man. The monster instantly combusts.”

127 years ago, a cartoonist, Richard Outcault, was sought out by competing newspapers:

“Homestarrunner.com is the Internet equivalent to The Yellow Kid, the comic introduced by Richard Outcault in the New York World in 1896. Outcault (a superb name for a cartoonist) was by no means the first newspaper cartoonist, but he twisted the genre and made something new of it. His lowlife street urchin in the yellow nightshirt was the Strong Bad of another era, and similarly gained a huge public following. The back-and-forth contest for Outcault’s services between Joseph Pulitzer at the New York World and William Randolph Hearst at The New York Journal is memorialized in the expression “yellow journalism.” The Chapmans have similarly taken full advantage of flash animation and the tricks of the keyboard to create a cartoon world that really couldn’t exist in any other medium.”

from: A National Review article in 2013.

Imagine being Jim Henson, and seeing your cartoons appropriated in a contest between IBM and Apple. How would you feel? Perhaps cooler about it if you received appropriate compensation. But in a laboratory, with a handful of scientists, businessmen, and perhaps a gaggle of reporters? How does one quantify the value of content in a technical demo? Similar to “Hello World,” the value of a meme is in its intrinsic (cap)ability to communicate, rather than its outward, projected (futures) or available commercial value. No savvy salesman would use a frumpy cartoon or stick figure animation in their tech demo or marketing material. They want to surround themselves with pop culture icons that everyone can relate to. In that sense, ideas precede products, not for obvious reasons, but because ideas and symbols become part of technology: “The medium is the message.” Until it’s not; perhaps the medium or message is no longer fashionable. But that is a different discussion needing its own post (maybe some other day).

“The strip's popularity drove up the World's circulation and the Kid was widely merchandised. Its level of success drove other papers to publish such strips, and thus the Yellow Kid is seen as a landmark in the development of the comic strip as a mass medium.[1]”

Consider how Xerox, IBM, and Apple all appropriated Muppet characters in their demos. PBS is a public corporation, but they still believe in copyright rather than public domain. Obviously there is little to nothing lost in trademark infringement when the use promotes, rather than competes with, a product. Détournement, on the other hand, can subvert a product’s stature and even its stock price. Recent examples of campaign rallies using artists’ music pose an interesting question on the definition of fair use as it relates to the marketability of their product:

“Artists may reasonably argue that a particular politician’s use of their copyrighted work at an event may adversely affect the future marketability and/or value of their work, constituting a violation of fair use.”

Perhaps no campaign appropriated a larger amount of (debatably) public domain content than Apple’s 1997 “Think Different” marketing ads (some with the permission of neighbors in opportune [high] places, such as Yoko Ono).

In a lot of ways, the funny papers are like a tech demo for newspapers: they transmit more information to wider audiences of all ages, while also communicating condensed political commentary in a shorter segment of print. The mass production of papers, with its lessened cost, certainly benefited from the services of a relatively inexpensive cartoonist.

Returning to Jobs’s commencement speech, I wanted to comment on a similar feeling I had, although related not to completing college but to graduate school. In a lot of ways, I think I obtained a greater education than Jobs: I did not have to worry about tuition, and I was far more fortunate. However, it’s not really possible or practical to compare oneself to another. Jobs was adopted; I was not. Jobs insisted he attend a safer middle school; my middle school wasn’t the best, but I do not know if that would have made a difference. I regret not being accepted to an accelerated math class in the eighth grade. A recurring comparison I’ve tried to make, though, is that a college degree in 1970 was worth about the same as a PhD today. Yet many successful businessmen dropped out of college, including Bill Gates and Mark Zuckerberg. Jobs stayed for one semester but hung around unofficially for three more. Gates dropped out after three semesters, and Zuckerberg in his sophomore year. These were businesspeople destined to be CEOs, who may not have seen themselves as subservient employees for the long term. Jobs and Wozniak initially pitched their Apple computer to Atari and HP; thus they did not intend to become CEO and CTO until they realized they had no other choice (mentioned in the video interview below). Thus, dropping out is an option for entrepreneurs if they believe they have acquired all the knowledge they need before embarking on the often lonesome quest for titanic businesses (note: Brian May obtained his PhD at 60, after his rock star fame).

“ The first story is about connecting the dots.

I dropped out of Reed College after the first 6 months, but then stayed around as a drop-in for another 18 months or so before I really quit. So why did I drop out?

It started before I was born. My biological mother was a young, unwed college graduate student, and she decided to put me up for adoption. She felt very strongly that I should be adopted by college graduates, so everything was all set for me to be adopted at birth by a lawyer and his wife. Except that when I popped out they decided at the last minute that they really wanted a girl. So my parents, who were on a waiting list, got a call in the middle of the night asking: “We have an unexpected baby boy; do you want him?” They said: “Of course.” My biological mother later found out that my mother had never graduated from college and that my father had never graduated from high school. She refused to sign the final adoption papers. She only relented a few months later when my parents promised that I would someday go to college.

And 17 years later I did go to college. But I naively chose a college that was almost as expensive as Stanford, and all of my working-class parents’ savings were being spent on my college tuition. After six months, I couldn’t see the value in it. I had no idea what I wanted to do with my life and no idea how college was going to help me figure it out. And here I was spending all of the money my parents had saved their entire life. So I decided to drop out and trust that it would all work out OK. It was pretty scary at the time, but looking back it was one of the best decisions I ever made. The minute I dropped out I could stop taking the required classes that didn’t interest me, and begin dropping in on the ones that looked interesting.”

What’s clear is that Jobs didn’t just hoard money but hoarded memory and information. He would not have been as successful as he was if he had not tried to find a use for many past experiences or memories. “Offloading” them is not always a practical solution. It seems the best way to retain important memories is to revisit them as frequently as possible, and to seek to form new synapses linking them to similar or current observations, even if arbitrary or impractical. It is like renewing a library book even if one isn’t planning on reading it: an extension to a bookmark, perhaps long enough to find a use for it later on, or for “offloading” via archival techniques, so that it may get saved by others as well.

A funny Simpsons quote is when Homer says, “Every Time I Learn Something New It Pushes Some Old Stuff Out Of My Brain.”

Another episode, which I do not recall ever watching (no pun intended), suggests Homer has a photographic memory:

I think polymaths rely on a strong memory hoarding tendency. They also might have what is called a “photographic memory.”

But these skills are not exactly transferable, let alone listable on a resume:

https://blogs.scientificamerican.com/observations/advice-for-young-scientists-be-a-generalist/

“By now, decades after securing a tenured appointment, my advice to postdocs is different from the one I had received early in my career. I still admit that it is important to demonstrate excellence by developing a unique set of skills for solving specialized problems. But this skill set should be supplemented by a broad knowledge base. A foundation of general education enables wide maneuvers and unexpected discoveries. It provides the tools needed to venture into unexplored territories or to correct misguided specialists based on general principles.”

In Jobs’ commencement speech, he uses the word “dogma.”

“Your time is limited, so don’t waste it living someone else’s life. Don’t be trapped by dogma — which is living with the results of other people’s thinking.”

No computer salesman did more than Jobs to upend the dogma of computers as products in themselves for the technically savvy:

I was also fortunate to have lasted 9 semesters of higher education. In my eighth, I took a class by a professor who successfully challenged one of the prevailing dogmas of the era:

“In 1977, Carl Woese overturned one of the major dogmas of biology. Until that time, biologists had taken for granted that all life on Earth belonged to one of two primary lineages, the eukaryotes (which include animals, plants, fungi and certain unicellular organisms such as paramecium) and the prokaryotes (all remaining microscopic organisms). Woese discovered that there were actually three primary lineages. Within what had previously been called prokaryotes, there exist two distinct groups of organisms no more related to one another than they were to eukaryotes. Because of Woese’s work, it is now widely agreed that there are three primary divisions of living systems – the Eukarya, Bacteria, and Archaea, a classification scheme that Woese proposed in 1990.”

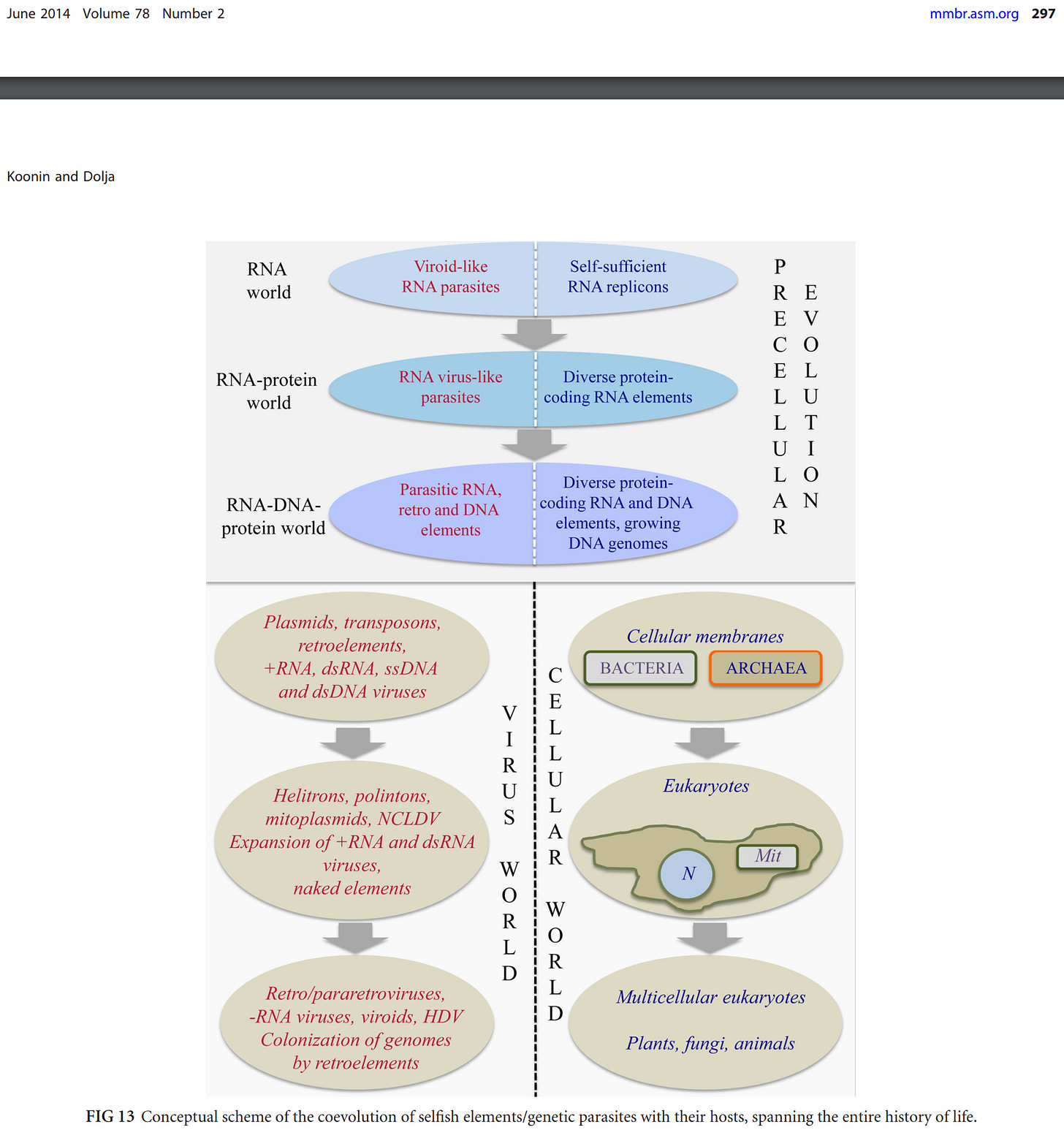

It is very possible that remnants of a “fourth” domain of “life” (in quotes because some do not define viruses as life, even though they carry elements of shared genes; the definition is less important than its features, all of which are subject to the scientific method) may be uncovered one day, perhaps a viral/RNA domain, as suggested by Koonin et al. (2014):

What is true, then, is that whenever scientific revolutions happen, prevailing dogma, even that of cherished former professors, is challenged and overturned.

A level-headed scientist does not look to overturn an assumption for the sake of causing a stir. This would be the wrong way to go about doing anything. However, those with natural curiosity have a propensity to test and “find out.”

Avoid TikTok whenever possible (despite my embedding of source)

A chemist whom Dr. Woese spoke highly of, Fred Sanger, talked modestly about his accomplishments, describing himself as "just a chap who messed about in a lab." It would not be surprising if the word “mess” was chosen in lieu of another word embedded above.

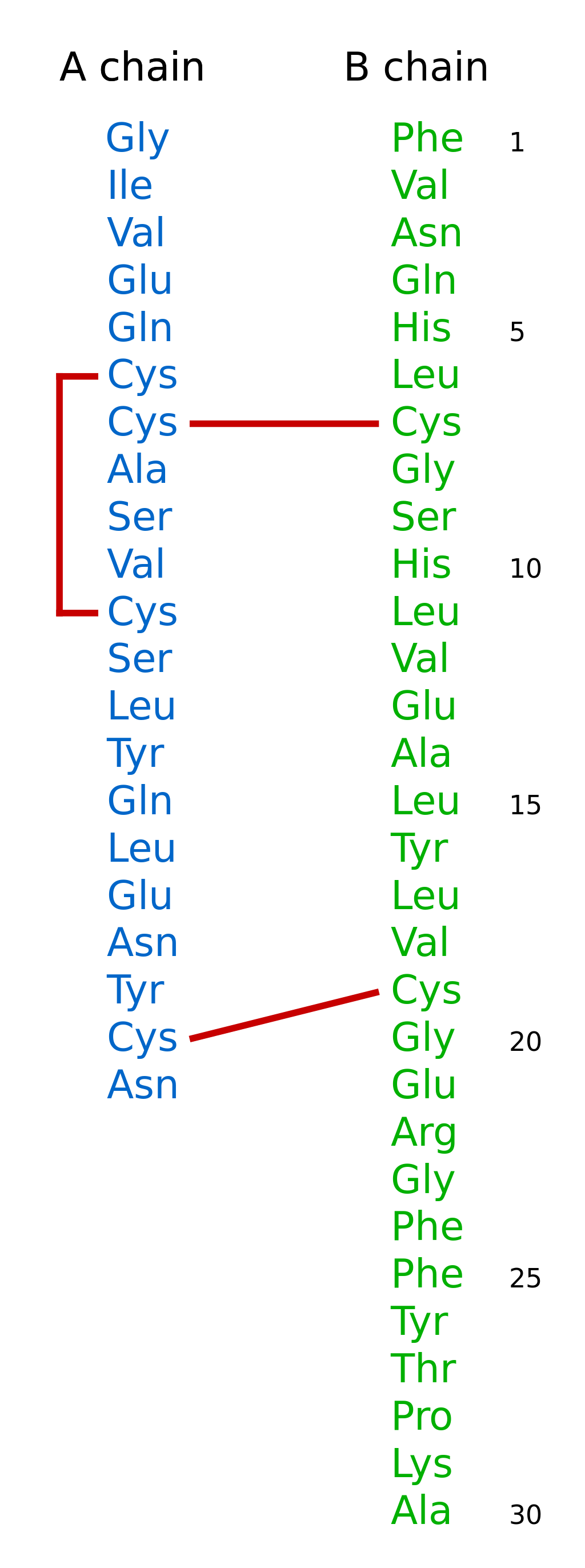

To appreciate Sanger is to understand that his accomplishments were both high tech for their time, and extraordinarily low-tech by today’s standards. In 1951, he sequenced the amino acid chains of insulin:

He sequenced RNA in 1965, although he was not the first, and in 1977 he sequenced DNA:

“In 1977 Sanger and colleagues introduced the "dideoxy" chain-termination method for sequencing DNA molecules, also known as the "Sanger method".[27][29] This was a major breakthrough and allowed long stretches of DNA to be rapidly and accurately sequenced. It earned him his second Nobel prize in Chemistry in 1980, which he shared with Walter Gilbert and Paul Berg.[30] The new method was used by Sanger and colleagues to sequence human mitochondrial DNA (16,569 base pairs)[31] and bacteriophage λ (48,502 base pairs).[32] The dideoxy method was eventually used to sequence the entire human genome.[33]”

Dr. Woese used Sanger’s RNA sequencing techniques for nearly 10 years, from 1967 to 1977. The RNA method was crucial to the discovery of the archaea. But another major discovery happened in 1983: PCR.

“The heat-resistant enzymes that are a key component in polymerase chain reaction were discovered in the 1960s as a product of a microbial life form that lived in the superheated waters of Yellowstone's Mushroom Spring.[81]

A 1971 paper in the Journal of Molecular Biology by Kjell Kleppe and co-workers in the laboratory of H. Gobind Khorana first described a method of using an enzymatic assay to replicate a short DNA template with primers in vitro.[82] However, this early manifestation of the basic PCR principle did not receive much attention at the time and the invention of the polymerase chain reaction in 1983 is generally credited to Kary Mullis.[83]

When Mullis developed the PCR in 1983, he was working in Emeryville, California for Cetus Corporation, one of the first biotechnology companies, where he was responsible for synthesizing short chains of DNA. Mullis has written that he conceived the idea for PCR while cruising along the Pacific Coast Highway one night in his car.[84] He was playing in his mind with a new way of analyzing changes (mutations) in DNA when he realized that he had instead invented a method of amplifying any DNA region through repeated cycles of duplication driven by DNA polymerase. In Scientific American, Mullis summarized the procedure: "Beginning with a single molecule of the genetic material DNA, the PCR can generate 100 billion similar molecules in an afternoon. The reaction is easy to execute. It requires no more than a test tube, a few simple reagents, and a source of heat."[85] DNA fingerprinting was first used for paternity testing in 1988.[86]”
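Mullis’s “100 billion” figure can be sanity-checked with a little arithmetic. What follows is only a rough sketch, assuming an idealized reaction in which every cycle exactly doubles the number of copies (real reactions are less efficient); the function names `cycles_needed` and `copies_after` are my own, made up for illustration:

```python
def cycles_needed(target_copies: int, start_copies: int = 1) -> int:
    """Smallest number of perfect doubling cycles that reaches target_copies.

    Assumption: each cycle doubles the copy count exactly (idealized PCR).
    """
    cycles = 0
    copies = start_copies
    while copies < target_copies:
        copies *= 2
        cycles += 1
    return cycles


def copies_after(cycles: int, start_copies: int = 1) -> int:
    """Copy count after a given number of perfect doubling cycles."""
    return start_copies * 2 ** cycles


if __name__ == "__main__":
    target = 100_000_000_000  # "100 billion similar molecules"
    n = cycles_needed(target)
    print(n, copies_after(n))
```

Under that assumption, a single starting molecule passes 100 billion copies on the 37th doubling, which is why a few dozen heating and cooling cycles can plausibly fit into an afternoon.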

Hypothetically, let’s say Dr. Woese did not have the curiosity to test Darwin’s Origin of Species:

“One needs look no further than the “doctrine of common descent” to find a candidate; common descent is something that essentially all modern biologists have taken for granted. Where did this doctrine come from? Why, Darwin, of course: didn't he say that all life stems from a single primordial form? Indeed he did. But look at the context and way in which Darwin addresses the issue in Origin of Species. Herein we read (12): “… [we may infer] that all the organic beings which have ever lived on this earth may be descended from some one primordial form. But this inference is chiefly grounded on analogy and it is immaterial whether or not it be accepted. No doubt it is possible, as Mr. G. H. Lewes has urged, that at the first commencement of life many different forms were evolved; but if so we may conclude that only a very few have left modified descendants.”

That doesn't sound like doctrine to me! Darwin was merely speculating about ultimate origins—a great gap in our knowledge and something to be defined and resolved when the time came. For Darwin, common descent was an open question, an invitation to discussion. What elevated common descent to doctrinal status almost certainly was the much later discovery of the universality of biochemistry, which was seemingly impossible to explain otherwise (49). But that was before horizontal gene transfer (HGT), which could offer an alternative explanation for the universality of biochemistry, was recognized as a major part of the evolutionary dynamic.

In questioning the doctrine of common descent, one necessarily questions the universal phylogenetic tree. That compelling tree image resides deep in our representation of biology. But the tree is no more than a graphical device; it is not some a priori form that nature imposes upon the evolutionary process. It is not a matter of whether your data are consistent with a tree, but whether tree topology is a useful way to represent your data. Ordinarily it is, of course, but the universal tree is no ordinary tree, and its root no ordinary root (61). Under conditions of extreme HGT, there is no (organismal) “tree.” Evolution is basically reticulate.”

Or, let’s say he did have the curiosity, but did not want to spend 10 years sequencing RNA using Sanger’s method. It was tedious. In 1967, Dr. Mullis’s PCR did not yet exist (let’s also remember that the relatively modern and quicker PCR-based sequencing of rRNA across several species did not exist in 1983 either; it came after DNA PCR).

If Woese had waited until automated techniques existed for RNA, such as RNA-Seq, it is very likely he could have achieved the same discovery in 1-2 years. But that isn’t the point of this essay. In 1967, there was no way of knowing that PCR was 16 years away. There was no waiting on others to accomplish anything, because all the tools he needed were available to him. Had he, as a scientist, not tested the dogma, the field would have completely solidified into an edifice. It’s possible that his open-minded thinking, together with a colleague’s interest in the methanogens, which belong to the same domain in which many thermophiles are found, contributed to the search for Thermus aquaticus (not an archaeon, though), the organism from which Taq polymerase was extracted for use in PCR:

“The discovery in 1976 of Taq polymerase—a DNA polymerase purified from the thermophilic bacterium, Thermus aquaticus, which naturally lives in hot (50 to 80 °C (122 to 176 °F)) environments[14] such as hot springs—paved the way for dramatic improvements of the PCR method. The DNA polymerase isolated from T. aquaticus is stable at high temperatures remaining active even after DNA denaturation,[15] thus obviating the need to add new DNA polymerase after each cycle.[3] This allowed an automated thermocycler-based process for DNA amplification.”

“Sanger's rule

... anytime you get technical development that’s two to threefold or more efficient, accurate, cheaper, a whole range of experiments opens up.[37]

“This rule should not be confused with Terence Sanger's rule, which is related to Oja's rule.”

Sanger also opened up a whole range of experiments to other scientists. For Dr. Woese, 10 years may not have sounded like a lot to a young, tenured professor, since he could research without much fear of needing to produce results. I recall reading an anecdote that he had a stack of unopened mail on his desk (including some from his administration inquiring about curricula) while he would work 9-5 on his research.

The cheaper technical accessibility of PCR was not necessary to produce the evolutionary discoveries in 1977. It would be needed, though, to advance RNA-“omics” research in the study of pre-biotic, evolutionary viruses. Statistical calculations of sub-enzyme domains, such as the PolD domain, and/or computational methods applied to conserved evolution will also represent a new era of evolutionary biology.

I didn’t anticipate this essay would steer this far into technical biology, but I think it’s important not to understate that Jobs was both a scientist and an engineer, and not just a “designer,” even though many do not see him that way. He was more a scientist who tested the dogma than one who worked on variations of an issue within a sub-domain. That is not to say that “small research topics” are not important. In Dr. Woese’s case, he was working on “invisible microorganisms,” as a microbiology instructor would call them when teaching about society’s early disbelief that germs caused disease (the pre-Koch view, called miasma theory, isn’t possible to fully dismiss in the context of SARS-CoV-2, given a renewed holistic approach to the environment instead of a singularly reductionist one, that is, a fundamentally reductionist one; see reductionism vs. reductionism section).

I also wanted to wrap up this essay by referencing the title, “Most ideas come from previous ideas.” Some ideas are just applications of old concepts to new platforms or technologies. Ideas also co-evolve, both in parallel for long periods and incongruously, eventually intersecting in a competitive environment. Memes evolve just as genes and software do. Occasionally they deconverge. Is it the Descent of Man or the Ascent of Man? To a Newtonian physicist, perhaps only absolute displacement matters. To make an analogy, a reductionist Newtonian, living in the 20th century, might only be interested in the end result of a traceroute: a ping latency, or a pass/fail result, which might be coded into the replication machinery of a biological cell. A non-reductionist Newtonian might be interested in the log files of all the hops in a tracert. This doesn’t upend Newtonian physics; it merely suggests not all Newtonians are fundamentalist reductionists. Extant cells do not necessarily carry log files of early evolutionary history, only the replicated fragments.
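The traceroute analogy can be put in a few lines of code. This is a toy sketch only: the route, host names, and latencies are invented for illustration (nothing here touches a real network), and the names `Hop`, `end_result`, and `hop_log` are my own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    host: str          # hypothetical router name
    latency_ms: float  # hypothetical cumulative round-trip time


# Made-up route for illustration only; not a real trace.
ROUTE = [
    Hop("gateway.example", 1.2),
    Hop("isp-core.example", 8.5),
    Hop("exchange.example", 21.0),
    Hop("destination.example", 34.7),
]


def end_result(route):
    """The reductionist view: did we arrive, and how long did it take?"""
    if not route:
        return {"reached": False, "latency_ms": None}
    return {"reached": True, "latency_ms": route[-1].latency_ms}


def hop_log(route):
    """The non-reductionist view: keep a record of every intermediate hop."""
    return [
        f"{i}: {hop.host} ({hop.latency_ms} ms)"
        for i, hop in enumerate(route, start=1)
    ]


if __name__ == "__main__":
    print(end_result(ROUTE))
    for line in hop_log(ROUTE):
        print(line)
```

Both functions read the same route; `end_result` simply throws away everything except the last hop, which is roughly what I mean by a cell keeping the replicated fragments rather than the full log.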

History is all about framing the story. Historians can shape and make a story as much by omission as by inclusion. This post was an attempt to juxtapose historical facts in a new and unique way. The hypothesis is that selecting highly specific contextual frameworks can recalibrate the perspective of “established” historical analysis.